Towards Magnanimous AGI

A neuroscience-first strategy for aligning advanced AI and the teams executing it

The design space of general intelligence is vast, and we have no idea how much of it is safe beyond the narrow region that we humans inhabit. We are racing towards Artificial General Intelligence (AGI) without building the guardrails necessary to prevent catastrophic risk. Because the human brain is the only broadly cooperative general intelligence we know of, it is our best reference point. So why hasn’t neuroscience impacted AI safety yet?

We met with hundreds of neuroscientists to find out. What we learned is that there is a clear path to safer AI through insights from the brain, but it requires an unconventional bet: we must actively accelerate neuroscience. This is the core of defensive accelerationism, a strategy to reduce systemic risks by selectively accelerating beneficial innovations to outpace harmful ones. Our position is that neuroAI is among the most important investments for AI safety, and that we can extract these insights fast enough to matter, even on relatively short AGI timelines.

Closing the Loop: Aligning Neuroscience with AI Timelines

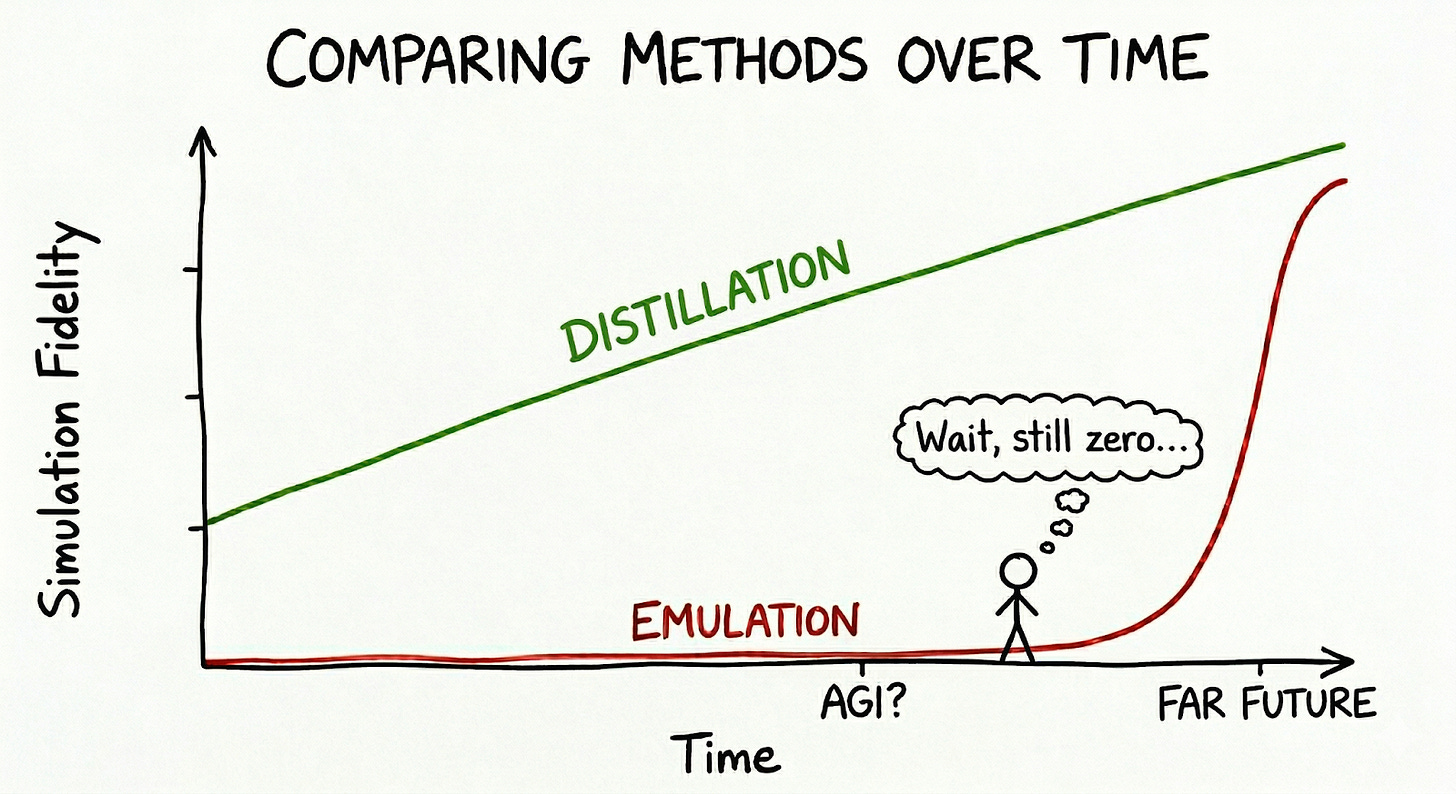

A common objection to a brain-inspired path to AI safety is that neuroscience moves too slowly to keep pace with rapid AI development. However, our work in frontier biology has taught us that you can dramatically increase the speed of discovery by building well-specified virtual models that allow for high-throughput testing of hypotheses. In the context of neuroAI, there are effectively two brain modeling strategies: emulation and distillation. Emulation aims to copy the brain’s precise biological structure, while distillation extracts its underlying rules and algorithms. These two approaches have fundamentally different development trajectories.

The Emulation Path: High Reward, Long Horizon

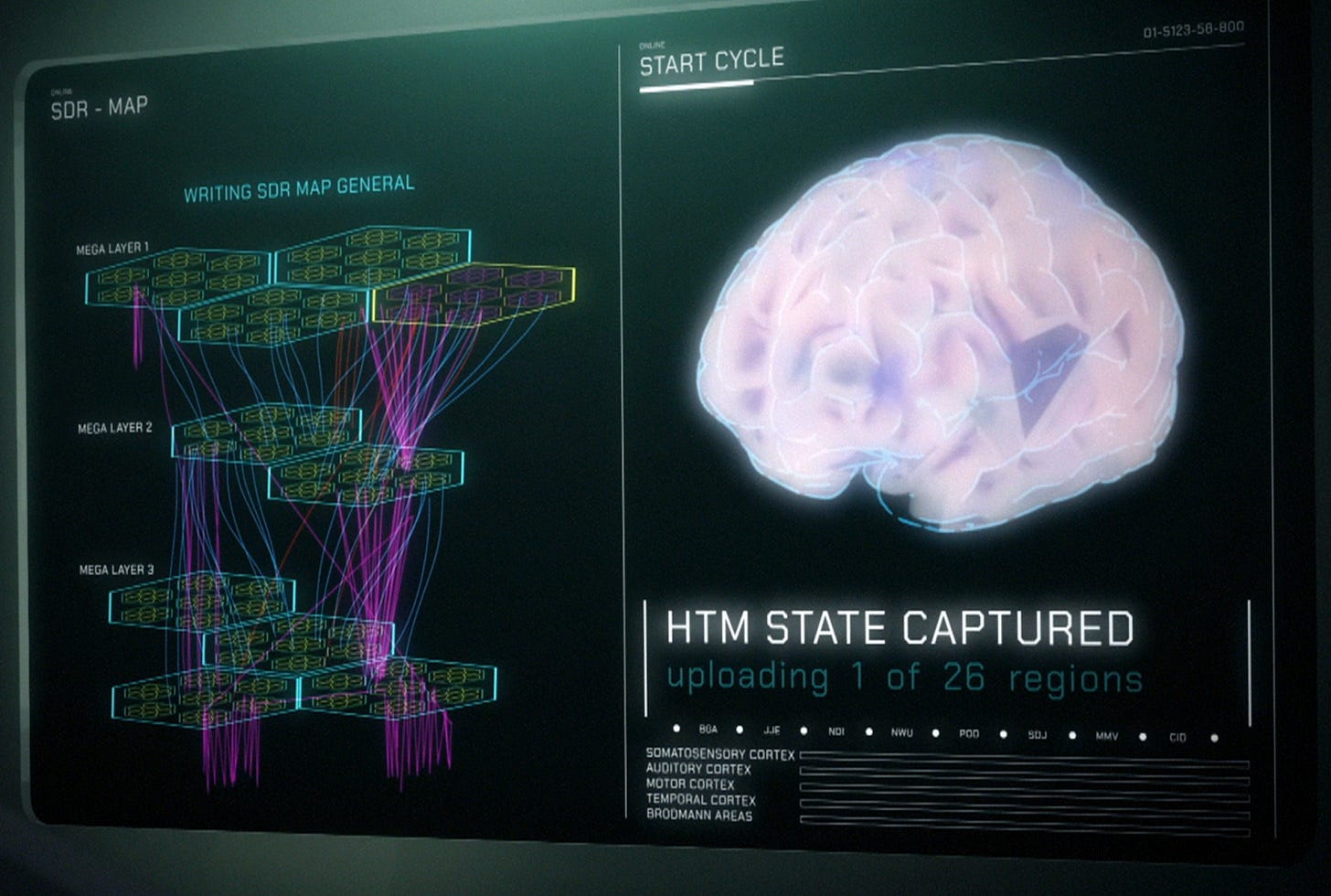

The emulation path is a mainstay of classic AI safety literature (e.g. Sandberg & Bostrom 2008, Bostrom 2014). The basic idea is straightforward: we scan the human brain with high-resolution microscopes to obtain a map of the brain – a connectome. Then, we emulate this scan in silico to create a biophysical simulation of a brain. This would allow us to run experiments at a scale and speed impossible in a living brain, to understand human intelligence and our prosocial instincts. It would also allow us to spin up huge numbers of virtual researchers to solve aging, ASI alignment, and beyond.

We evaluated this idea rigorously. Alongside the Foresight Institute, we sponsored a workshop at Oxford, convening dozens of neuroscientists and tool developers. We spoke with leading AI safety researchers, and many of them view brain emulation, if realized, as the most powerful way to reduce risk.

We found a field experiencing a dramatic drop in data acquisition costs. In 2023, it was estimated that it would cost $15B for a single mouse connectome, but we’ve already seen rapid progress. Disruptive technologies, like E11 Bio’s protein labeling and Google Connectomics’s computer vision advances, could dramatically decrease this cost to below $1B over the next decade. This would be a remarkable achievement and accelerate neuroscience broadly. We’re glad to support E11’s work on this.

Yet, getting the connectome is only half the battle. The human brain is the most complex system humanity has ever attempted to simulate, and even a perfect structural map would not suffice for faithful emulation. We would still lack critical information about synaptic strengths, kinetics, and neuromodulatory states that actually drive the system’s behavior. Acquiring this calibration data will likely take over a decade. Barring a Manhattan-Project-style mobilization, the emulation path is unlikely to yield results in time for short AGI timelines.

The Distillation Path: Fast, Functional, and Attainable

The other path to leveraging the brain for AGI safety is distillation. To keep human societies stable and collaborative, specific aspects of cognition, decision-making, and computation are essential. The challenge is identifying them.

As researchers like Ilya Sutskever and Doris Tsao have warned, we should not merely emulate the brain, but rather extract the correct features from it: that is, distill its distributed representations and underlying algorithms from functional brain models.

There is much to learn from the brain’s representational structure: it is incredibly robust, generalizing and learning effectively with minimal data exposure. It demonstrates how sparse coding manifests as compressed, highly interpretable representations. Studying this could lead to new architectures that are more interpretable, which is one of the most important goals of AI safety.

Instead of simulating every neuron and synapse, we can train neural networks to replicate recorded neural responses, building highly accurate functional models of the brain (Tolias Lab, 2025). We can then dissect these systems in silico (Ganguli Lab, 2023) to deconstruct how the brain’s representations are structured and how they change. These functional simulations provide a sandbox for virtual experiments at scale, allowing us to test and generate hypotheses in silico before verifying them back in vivo. Extending this approach to circuits underlying emotion and social behavior could reveal the precise mechanics of how the brain encodes empathy, cooperation, and moral reasoning.
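To make the functional-modeling loop concrete, here is a minimal sketch on synthetic data. It is not the Enigma or Tolias Lab pipeline: a ridge regression stands in for the neural network, the "recordings" are simulated from a hypothetical linear tuning model, and every name (`fit_ridge`, `simulate_recordings`, the sizes and noise level) is an assumption made for illustration. It shows the two steps described above: fit a model that predicts recorded responses from stimuli, then run a virtual experiment on the fitted model.

```python
import numpy as np


def simulate_recordings(n_trials, true_weights, noise_std, rng):
    """Synthetic stand-in for an experiment: random stimuli, noisy linear responses."""
    n_features, n_neurons = true_weights.shape
    stimuli = rng.standard_normal((n_trials, n_features))
    noise = noise_std * rng.standard_normal((n_trials, n_neurons))
    return stimuli, stimuli @ true_weights + noise


def fit_ridge(stimuli, responses, penalty=1.0):
    """Closed-form ridge regression from stimulus features to neural responses."""
    n_features = stimuli.shape[1]
    gram = stimuli.T @ stimuli + penalty * np.eye(n_features)
    return np.linalg.solve(gram, stimuli.T @ responses)


def columnwise_correlation(a, b):
    """Pearson correlation between matching columns of a and b."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    return (a * b).sum(axis=0) / (np.linalg.norm(a, axis=0) * np.linalg.norm(b, axis=0))


rng = np.random.default_rng(0)
true_weights = rng.standard_normal((20, 50))  # assumed: 20 stimulus features, 50 neurons

# Step 1: fit the functional model on "recorded" data, check it on held-out trials.
train_x, train_y = simulate_recordings(2000, true_weights, 1.0, rng)
test_x, test_y = simulate_recordings(500, true_weights, 1.0, rng)
weights = fit_ridge(train_x, train_y)
held_out_corr = columnwise_correlation(test_x @ weights, test_y)

# Step 2: an in silico experiment. For a linear model, the unit-norm stimulus
# that maximally drives neuron 0 is its normalized weight vector.
best_stimulus = weights[:, 0] / np.linalg.norm(weights[:, 0])
true_direction = true_weights[:, 0] / np.linalg.norm(true_weights[:, 0])
alignment = float(best_stimulus @ true_direction)

print("worst held-out correlation:", held_out_corr.min())
print("alignment of predicted and true preferred stimulus:", alignment)
```

In a real study the prediction from step 2 would be the hypothesis taken back in vivo for verification; here the ground truth is known only because the data are simulated.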

This is why we funded the Enigma Project at Stanford University and Metamorphic: an integrated, frontier research effort that co-designs experiments and modeling. Through Enigma, we built conviction that the data required to scale functional modeling is highly attainable on short timelines, based on two key insights:

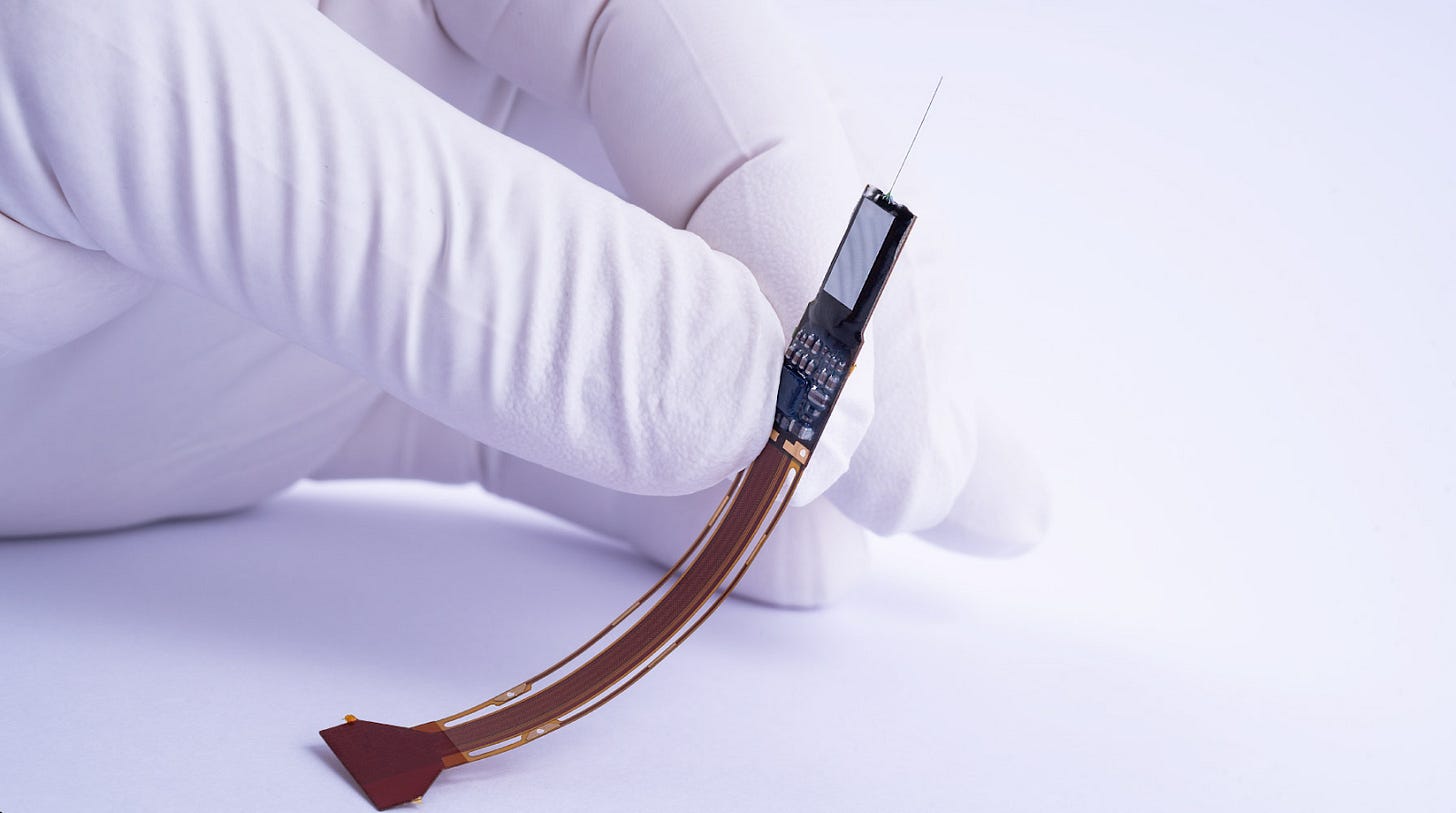

First, we don’t need to record every neuron! Random projection theory (the Johnson-Lindenstrauss lemma) shows that the pairwise geometry of a set of points is approximately preserved under projection to far fewer dimensions, which suggests that the geometry of neural representations can survive subsampling. Because the brain is highly interconnected, meaningful low-dimensional structures can be recovered from surprisingly sparse recordings.
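The subsampling argument can be illustrated with a toy simulation. This is a sketch under strong assumptions, not a model of real recordings: responses are generated from a low-dimensional latent state that is mixed densely and randomly across all neurons, which is the regime where recording a random subset of neurons behaves like a random projection. The function names, population sizes, and latent dimension are all hypothetical.

```python
import numpy as np


def pairwise_distances(x):
    """Euclidean distances between all distinct pairs of rows of x."""
    sq = np.sum(x**2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * x @ x.T
    upper = np.triu_indices(x.shape[0], k=1)
    return np.sqrt(np.clip(d2[upper], 0.0, None))


def subsampling_distortion(n_neurons=5000, n_recorded=500, n_stimuli=200,
                           latent_dim=10, seed=0):
    """Relative error in pairwise distances when only a subset of neurons is recorded."""
    rng = np.random.default_rng(seed)
    # Assumption: population activity is a dense random mixture of a few latents.
    latents = rng.standard_normal((n_stimuli, latent_dim))
    mixing = rng.standard_normal((latent_dim, n_neurons))
    responses = latents @ mixing

    # "Record" a random subset of neurons.
    recorded = rng.choice(n_neurons, size=n_recorded, replace=False)

    full = pairwise_distances(responses)
    # Rescale so distances are comparable across population sizes.
    sub = pairwise_distances(responses[:, recorded]) * np.sqrt(n_neurons / n_recorded)
    return np.abs(sub / full - 1.0)


distortion = subsampling_distortion()
print("mean relative distortion:", distortion.mean())
print("worst relative distortion:", distortion.max())
```

With these assumed settings only 10% of the simulated neurons are recorded, yet the pairwise distances between stimulus representations stay close to those of the full population. If the signal were concentrated in a few neurons rather than spread across many, random subsampling could miss it, so the guarantee depends on the mixing assumption.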

Second, the hardware is ready to scale. Neuropixels probes provide a well-laid technological path to dramatically increase representation fidelity. Our funding for Neuropixels will deliver significantly higher recording density than previous generations, and it builds on a decade of prior work at IMEC and funding from their supporters.

Building a Deep Bench for AI Safety

Finally, why not just fund conventional AI safety research? While we strongly support traditional approaches, we believe unconventional research offers uniquely high leverage at this critical juncture.

Historically, safety research has mirrored the prevailing AI paradigms of the day. In 2014, the field implicitly assumed Reinforcement Learning was the sole path to AGI. Today, safety efforts are heavily indexed on LLMs and AI agents built on top of them. However, even if LLMs cross the threshold to AGI, they will not be the final stop in the evolutionary lineage of AI systems. Detailed AI projection scenarios (e.g. AI 2027) assume that the first AGI systems will be used to create the next generation of more efficient AGI, likely based on entirely different principles and architectures. The first general intelligence will neither be the last, nor the best.

To secure these new AI systems with unforeseen capabilities, the brain offers foundational safety principles that can guide their architectural design. In any conceivable scenario, the human brain remains the ultimate alignment target. Put simply: you cannot align a system to human values without fundamentally understanding how those values are implemented.

Join the Frontier

The window to align AGI is closing, but the tools to map and understand the only working model of safe general intelligence are scaling rapidly. We are actively deploying capital and building teams to accelerate this future.

Metamorphic is developing large-scale foundation models trained on rich, continuous neural data — high-resolution brain models at a scale never before possible. They are looking for research engineers and scientists to join their growing AI research team.

E11 Bio is recruiting at multiple levels, including for an experienced operations lead – if you’re excited about connectomics as a high-leverage path to AI safety and possess exceptional talent, integrity, and innate motivation, check out their open positions.

Constellation is looking for passionate operations, ML engineering, and post-training experts to develop their foundation model of human state.

Many thanks to Sophia Sanborn, Andreas Tolias, Adam Marblestone, Andrew Payne, and Grant Hummer for feedback and review.

It's somewhat contradictory pivoting from deep mapping - assuming hardware will indeed teach us about software - to a distillation compromise. Moreover, acquiring the connectome might be very much less than "only half the battle", if one looks beyond the cellular level. If I'm right in synthesizing current neurobiology and concluding LLMs are really but an "externalized cerebellum", then your mission statement truly is a compilation of multiple mismatches.

I see the alignment framing, but it seems like too many steps motivate fundamental research questions. How does the neuroscience funding landscape in general compare to funding for neuroscience for alignment, for example for people studying critical questions such as the redundancy of neurons, mathematical frameworks for neuroscience, etc.?